Introduction

An aerospace component emerges from a CNC machine, its machined surfaces gleaming under inspection lights. Visually, it appears flawless. Yet under operational stress at altitude, the part fails catastrophically — not because the material was defective, but because a critical dimension was off by just 0.003 inches. That margin of error — invisible to the eye — is why precision measurement sits at the core of high-stakes manufacturing, not at the end of it.

In aerospace, defense, and medical device manufacturing, measurement errors produce safety failures, regulatory non-compliance, and costly rework. According to ASQ and APQC benchmarking data, cost of poor quality runs 10–15% of total revenue for aerospace manufacturers, with scrap rates averaging 2–3% in high-mix environments.

A significant share of those losses traces back to measurement system failures — not material defects or machine errors.

What follows covers the fundamentals: what precision measurement means, how it differs from accuracy, which tools and systems industrial settings rely on, and how ISO 9001:2015 and AS9100D standards govern measurement practice in certified shops.

TLDR

- Precision measurement quantifies physical properties repeatably and traceably, using calibrated instruments and standardized methods

- Accuracy and precision are independent: one measures closeness to true value, the other measures repeatability

- Key tools include calipers, micrometers, CMMs, and gauge blocks, selected based on required tolerance

- Errors stem from instrument calibration drift, thermal expansion, operator variability, and surface irregularities

- ISO 9001:2015 and AS9100D require traceable calibration and measurement system control for aerospace compliance

What Is Precision Measurement?

Precision measurement is the practice of determining a physical quantity — length, diameter, flatness, angle, or surface finish — with a controlled, verifiable degree of exactness, using calibrated instruments and documented methods. Unlike casual measurement with a tape measure, precision measurement requires instrument selection, environmental controls, and uncertainty quantification.

Understanding Measurement Resolution

The concept of resolution defines the smallest increment a measuring instrument can detect. A standard ruler resolves to the nearest millimeter, while a digital micrometer resolves to 0.001 mm (1 micron). For example:

- Standard ruler: Resolution = 1 mm

- Digital caliper (Mitutoyo AOS CD-AX): Resolution = 0.01 mm, accuracy ± 0.02 mm

- High-accuracy digital micrometer (Mitutoyo MDH-25MB): Resolution = 0.0001 mm, accuracy ± 0.5 µm

Instrument choice directly determines achievable precision. Measuring a shaft diameter specified as 24.600 mm ± 0.005 mm with a standard ruler would be impossible; a digital micrometer with 0.001 mm resolution is the minimum appropriate tool.
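As a rough illustration of how tolerance drives instrument choice, the sketch below applies a 10:1 rule of thumb: the instrument should resolve at least ten increments across the total tolerance band. That ratio is a common shop convention assumed here for illustration, not a requirement stated above, and the function name is hypothetical.

```python
def resolution_is_adequate(tolerance_band_um: float,
                           resolution_um: float,
                           min_ratio: float = 10.0) -> bool:
    """Return True if the tolerance band spans at least `min_ratio` resolution steps.

    `min_ratio=10` is an assumed rule of thumb, not a value taken from a standard.
    """
    return tolerance_band_um / resolution_um >= min_ratio


# Shaft specified as 24.600 mm +/- 0.005 mm, so the total band is 10 um.
band_um = 10.0

# Resolutions from the examples above, converted to micrometres.
instruments_um = {
    "standard ruler": 1000.0,
    "digital caliper": 10.0,
    "digital micrometer": 1.0,
    "high-accuracy digital micrometer": 0.1,
}

for name, resolution in instruments_um.items():
    verdict = "adequate" if resolution_is_adequate(band_um, resolution) else "too coarse"
    print(f"{name}: {verdict}")
```

Resolution is only a first filter. The instrument's stated accuracy and the overall measurement uncertainty still have to fit comfortably inside the tolerance.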

Measurement Uncertainty: Acknowledging Limits

All measurements carry some degree of uncertainty — a quantified acknowledgment of measurement limits. Reporting a measurement as "24.6 mm ± 0.3 mm" communicates that the true value lies within that range with a defined confidence level. According to JCGM 100:2008 (the Guide to the Expression of Uncertainty in Measurement), uncertainty is "a parameter, associated with the result of a measurement, that characterizes the dispersion of the values that could reasonably be attributed to the measurand."

Uncertainty is not a flaw. It is a professional acknowledgment that no measurement is perfect, and responsible reporting includes this range.
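One common way to arrive at a "±" value is the root-sum-of-squares combination described in the GUM. The sketch below assumes the contributions are uncorrelated, are already expressed as standard uncertainties in the units of the result, and are expanded with a coverage factor of k = 2 (roughly 95% coverage for a normal distribution). The component names and values are made up for illustration.

```python
import math


def combined_standard_uncertainty(components_um):
    """Root-sum-of-squares of uncorrelated standard uncertainties (in um)."""
    return math.sqrt(sum(u ** 2 for u in components_um))


def expanded_uncertainty(components_um, k=2.0):
    """Expanded uncertainty U = k * u_c; k = 2 is an assumed coverage factor."""
    return k * combined_standard_uncertainty(components_um)


# Hypothetical uncertainty budget for a micrometer reading, in micrometres.
budget_um = {
    "instrument calibration": 0.3,
    "repeatability": 0.4,
}

u_c = combined_standard_uncertainty(budget_um.values())
U = expanded_uncertainty(budget_um.values())
print(f"u_c = {u_c:.2f} um, U (k=2) = {U:.2f} um")
```

A real budget would usually carry more contributors (thermal effects, reference standard, operator) and may need sensitivity coefficients, which this sketch leaves out.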

Where Precision Measurement Appears in Manufacturing

Precision measurement is not a single inspection step but an ongoing discipline embedded throughout the manufacturing workflow:

- Incoming material inspection: Verifying raw material dimensions and certifications before machining

- In-process quality checks: Monitoring dimensions during CNC machining to detect drift before producing scrap

- Final part verification: Comprehensive dimensional inspection before delivery to confirm conformance to engineering drawings

For example, DM&E — an AS9100D-certified precision manufacturer — integrates measurement at all stages. This includes coordinate measuring machines (CMMs), certified granite tables, and First Article Inspection (FAI) reporting to confirm aerospace and defense components meet tolerances ranging from ±0.005 to ±0.0005 inches.

That level of embedded measurement discipline directly determines whether finished parts pass or fail — which brings into focus why the stakes are so high.

Why Precision Measurement Matters in Aerospace and Defense

In aerospace, defense, and robotics, tight tolerances are defined by engineering drawings and regulatory requirements.

Deviation from those tolerances can lead to:

- Part rejection and rework costs: Scrap rates of 2–3% are typical in high-mix aerospace manufacturing, with cost of poor quality running at 10–15% of total revenue

- Safety failures: Critical components operating under stress, vibration, or extreme temperatures must meet exact specifications

- Regulatory non-compliance: AS9100D and FAI requirements mandate documented measurement traceability

Human error accounts for over 50% of manufacturing defects in complex aerospace environments — which is precisely why structured measurement systems, not individual judgment, are the standard for reducing variability and catching deviations early.

Accuracy vs. Precision: Understanding the Difference

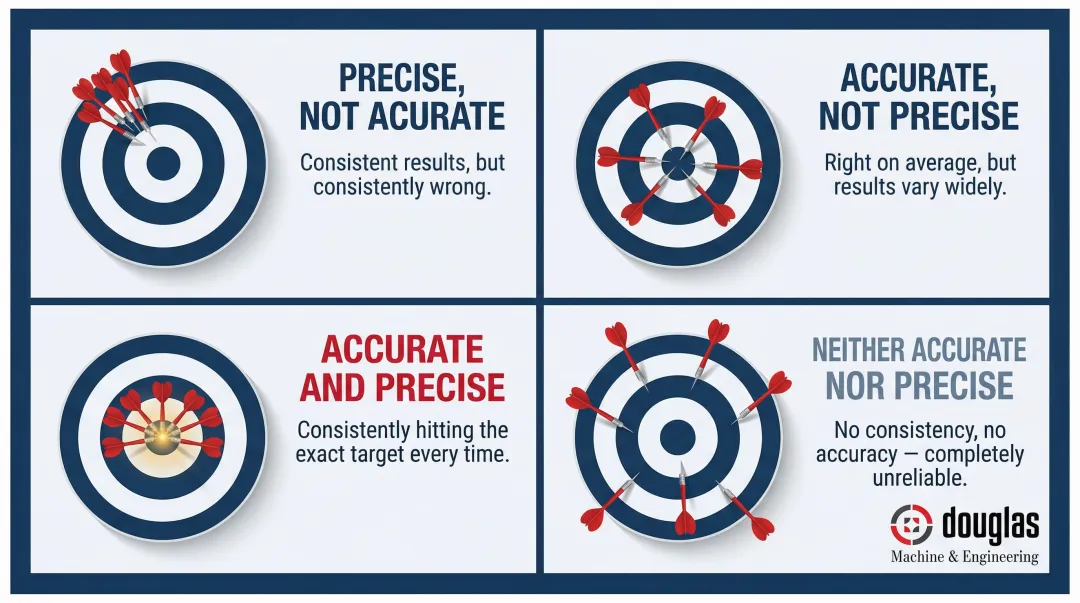

Accuracy is how close a measured value is to the true or accepted reference value. Precision is how closely a set of repeated measurements clusters together, regardless of whether they are close to the true value. These are independent properties: a measurement system can be precise but inaccurate, accurate but imprecise, both, or neither.

The Dartboard Analogy

- Darts clustered together but far from the bullseye: Precise but not accurate

- Darts spread around the center: Accurate on average but not precise

- Darts clustered on the bullseye: Both accurate and precise

- Darts scattered randomly: Neither accurate nor precise

In manufacturing, a CNC machine that consistently cuts parts 0.005 inches too short is precise but inaccurate. The fix is to correct the systematic offset (through calibration or offset compensation), not to reduce variability.

Systematic vs. Random Errors

Systematic errors produce consistent inaccuracy and affect accuracy:

- Miscalibrated measuring tool (e.g., a micrometer reading 0.002 mm high)

- Thermal expansion of a workpiece measured at 25°C instead of the standard 20°C reference temperature

- Worn machine tool spindle causing consistent dimensional bias

Random errors produce inconsistent scatter and affect precision:

- Operator variation in contact pressure when using handheld calipers

- Surface irregularities on the part being measured

- Environmental vibration during measurement

The corrective path depends on the error type. Systematic errors require calibration or environmental control; random errors call for improved technique, better fixturing, or more capable instruments. Understanding which type you're dealing with shapes everything from how you diagnose a measurement problem to which standard applies.
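A quick way to tell the two error types apart is to measure a known reference several times and look at the offset and the scatter separately. The sketch below assumes a gauge block of known length and a handful of made-up readings; the function name is hypothetical.

```python
from statistics import mean, stdev


def bias_and_spread(readings_mm, reference_mm):
    """Split repeated readings into systematic and random parts.

    bias   = mean reading minus the reference value (systematic error)
    spread = sample standard deviation of the readings (random error)
    """
    bias = mean(readings_mm) - reference_mm
    spread = stdev(readings_mm)
    return bias, spread


# Hypothetical: five readings of a 25.000 mm gauge block with one micrometer.
readings = [25.002, 25.002, 25.003, 25.001, 25.002]
bias, spread = bias_and_spread(readings, reference_mm=25.000)

print(f"bias   = {bias * 1000:+.1f} um")
print(f"spread = {spread * 1000:.1f} um (1 standard deviation)")
```

Here the offset is larger than the scatter, which points toward calibration rather than technique or fixturing.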

ISO 5725: The Formal Framework

ISO 5725-1:2023 formally defines accuracy as comprising two components:

- Trueness: Closeness of the mean result to the true value (systematic error)

- Precision: Repeatability and reproducibility (random error)

This definition underpins measurement quality assurance in manufacturing and shapes how measurement system analysis (MSA) studies are conducted.

Repeatability vs. Reproducibility

Repeatability is consistency when the same operator uses the same instrument under the same conditions. Reproducibility is consistency when different operators, instruments, or conditions are used.

Both matter in production environments where multiple operators handle the same measurement task.

A system can show excellent repeatability — one operator gets consistent results — yet poor reproducibility when different operators take over. That gap points to a training or technique issue, not an instrument problem.

Key Precision Measurement Systems and Tools

Precision measurement instruments range from manual handheld tools to automated digital systems. Selection depends on the required tolerance, geometry complexity, and production volume.

Handheld Measurement Tools

Calipers and Micrometers

Calipers (vernier, dial, and digital) and micrometers are the most common handheld precision tools in machine shops.

- Calipers: Measure outside diameter (OD), inside diameter (ID), depth, and step height. Digital calipers typically resolve to 0.01 mm with accuracy ± 0.02 mm

- Micrometers: Measure OD with higher precision. High-accuracy digital micrometers resolve to 0.0001 mm (0.1 µm) with accuracy ± 0.5 µm

Proper technique directly affects reliability:

- Apply consistent contact pressure (over-tightening introduces error)

- Allow thermal equilibration (measure parts at standard reference temperature)

- Verify zero setting before use

Gauge Blocks (Slip Gauges)

Gauge blocks are the precision reference standard used to calibrate and verify other instruments. They are ground and lapped to specific tolerances and provide traceability to national measurement standards, such as those maintained by NIST.

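Gauge block grades define tolerance levels. Under the legacy U.S. grade designations (Federal Specification GGG-G-15C, since superseded by the K, 00, 0, AS-1, and AS-2 grades of ASME B89.1.9-2002):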

- Grade 0.5: ± 0.03 µm for blocks under 10 mm

- Grade 1: ± 0.05 µm for blocks under 10 mm

Gauge blocks enable calibration verification without sending instruments offsite, supporting daily quality checks in temperature-controlled inspection rooms.

When handheld tools reach their limits — complex geometries, tight form tolerances, high-volume inspection — coordinate measuring machines take over.

Coordinate Measuring Machines (CMMs)

CMMs are the workhorses of dimensional inspection in precision manufacturing. They use a probe to physically contact a workpiece surface at programmed points and compute geometry, including flatness, roundness, true position, and perpendicularity, in three-dimensional space.

Why CMMs Matter:

- Measure complex geometries that handheld tools cannot (e.g., true position of hole patterns, form tolerances)

- Automate inspection routines for repeatability

- Generate detailed inspection reports for FAI and quality documentation

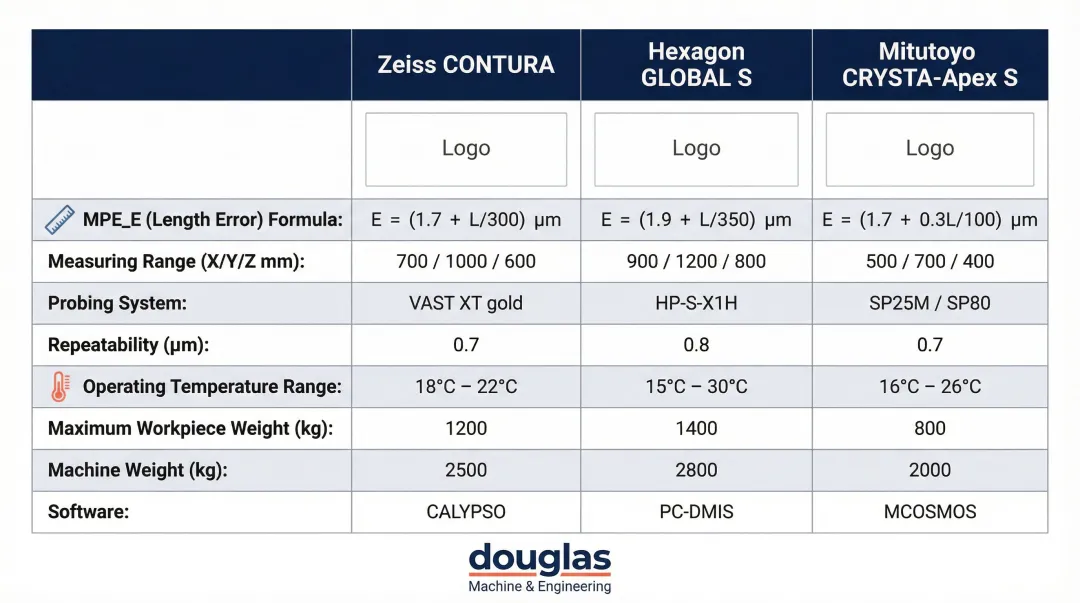

CMM Accuracy and Environmental Controls

CMM accuracy depends on machine calibration, environmental controls, and probe qualification. Industrial CMMs typically specify a maximum permissible error of length measurement ($MPE_E$) per ISO 10360-2, expressed in µm as a function of the measured length L in mm:

| Manufacturer | Model | $MPE_E$ (Length Error) | Temperature Range |
|---|---|---|---|
| Zeiss | CONTURA | 1.5 + L/350 µm | 18–22°C |
| Hexagon | GLOBAL S | 1.4 + L/333 µm | 18–22°C |
| Mitutoyo | CRYSTA-Apex S | 1.7 + 3L/1000 µm | 20 ± 2°C |

Temperature-controlled rooms (typically 20°C ± 2°C) are standard for CMM installations to minimize thermal expansion errors. DM&E's AS9100D-certified inspection process incorporates CMM analysis through Exact Metrology to verify aerospace components with tolerances as tight as ±0.0005 inches, maintaining full measurement traceability as required by the standard.
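To see what the $MPE_E$ formulas in the table mean for a given feature, the sketch below evaluates them at a chosen length and compares the result with a ±0.0005 inch tolerance. It assumes L is the measured length in millimetres and the result is in micrometres, the usual convention for these specifications. Actual values depend on the specific machine configuration and probe, so treat the numbers as illustrative.

```python
# MPE_E formulas copied from the table above: result in um, length in mm.
MPE_E_UM = {
    "Zeiss CONTURA": lambda length_mm: 1.5 + length_mm / 350,
    "Hexagon GLOBAL S": lambda length_mm: 1.4 + length_mm / 333,
    "Mitutoyo CRYSTA-Apex S": lambda length_mm: 1.7 + 3 * length_mm / 1000,
}

INCH_TO_UM = 25_400.0


def mpe_share_of_tolerance(model, length_mm, tolerance_in):
    """Return MPE_E as a fraction of a symmetric +/- tolerance given in inches."""
    return MPE_E_UM[model](length_mm) / (tolerance_in * INCH_TO_UM)


# Hypothetical feature: a 300 mm length toleranced at +/- 0.0005 in.
for model, formula in MPE_E_UM.items():
    mpe = formula(300.0)
    share = mpe_share_of_tolerance(model, 300.0, 0.0005)
    print(f"{model}: MPE_E = {mpe:.2f} um ({share:.0%} of +/-0.0005 in)")
```

The share of tolerance consumed by the machine's permissible error grows with feature length, which is one reason long, tightly toleranced features are the hardest to verify.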

Surface and Form Measurement Systems

Surface Roughness Testers (Profilometers)

Surface finish is a precision measurement in its own right, critical in aerospace sealing surfaces, medical devices, and hydraulic components. Ra (Roughness Average) is the arithmetic average of profile height deviations, commonly specified on engineering drawings per ASME B46.1-2019 and ISO 4287.

| Ra Value (µm) | Ra Value (µin) | Typical Application | Typical Process |
|---|---|---|---|
| 0.4 | 16 | Sealing surfaces, precision bores | Grinding, honing, lapping |
| 0.8 | 32 | Bearing surfaces, mating faces | Fine turning, grinding |
| 1.6 | 63 | General machined surfaces | Standard CNC milling/turning |
| 3.2 | 125 | Non-critical surfaces | Standard machining |
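The definition of Ra as an arithmetic average can be sketched in a few lines. This is a simplified illustration on made-up profile heights: it assumes evenly spaced samples and takes the mean of the samples as the reference line. Real profilometers also apply filtering and cutoff lengths defined in the standards, which are omitted here.

```python
def roughness_average(heights_um):
    """Ra: mean absolute deviation of profile heights from their mean line (um)."""
    mean_line = sum(heights_um) / len(heights_um)
    return sum(abs(z - mean_line) for z in heights_um) / len(heights_um)


# Hypothetical profile samples in micrometres.
profile_um = [0.9, -0.7, 1.1, -1.2, 0.6, -0.8, 1.0, -0.9]
print(f"Ra = {roughness_average(profile_um):.2f} um")
```

Because Ra averages the deviations, two surfaces with very different peak shapes can share the same Ra value, so drawings sometimes call out additional parameters.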

Roundness and Form Measuring Systems

These systems measure geometric deviations such as roundness, cylindricity, and concentricity. Each parameter carries real performance consequences: out-of-round bores accelerate bearing wear, poor cylindricity causes hydraulic leakage, and concentricity errors create vibration in rotating assemblies. Dedicated form measurement equipment resolves deviations down to 0.01 µm, well beyond the capability of CMMs for these specific characteristics.

What Causes Measurement Error?

Measurement errors arise from four primary categories: instrument-related factors, environmental conditions, operator technique, and workpiece characteristics.

1. Instrument-Related Errors

- Poor calibration: Instruments drift over time; uncalibrated tools produce systematic error

- Resolution limits: Measuring a 0.001-inch tolerance with a 0.01-inch resolution tool is inadequate

- Mechanical wear: Worn anvils, damaged probes, or loose components introduce variability

2. Environmental Factors

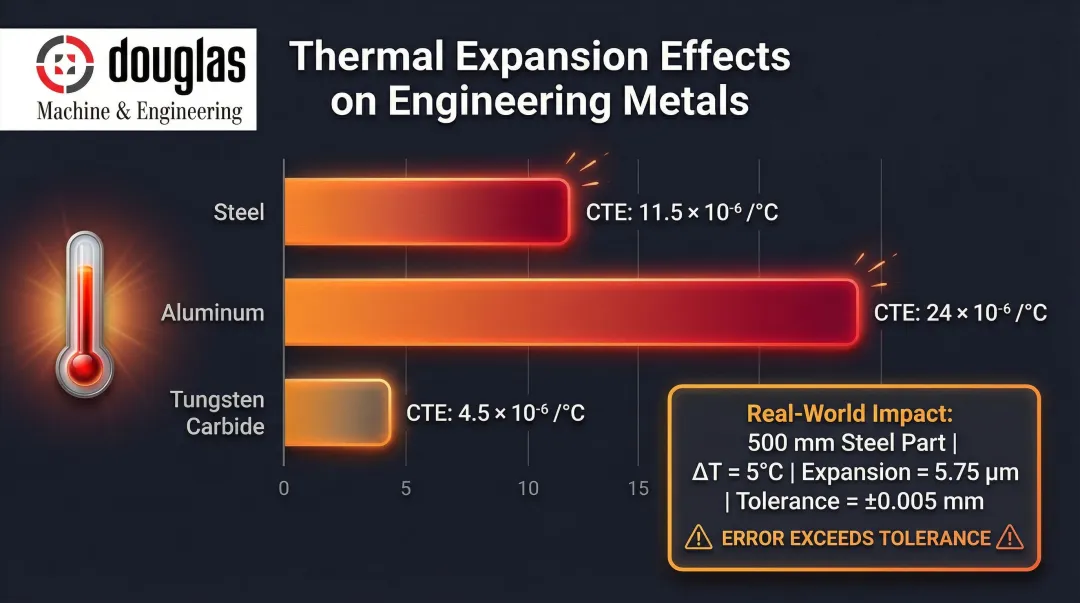

Temperature and Thermal Expansion

Temperature drives more dimensional error than most shops expect. Metals expand and contract with temperature changes — measurements taken on a hot part fresh off a CNC machine will differ from those taken at the standard reference temperature.

ISO 1:2022 mandates 20°C (68°F) as the standard reference temperature for geometrical and dimensional properties. Deviations require thermal compensation.

Thermal expansion coefficients (CTE):

- Steel: 11.5 × 10⁻⁶ /°C

- Aluminum: 24 × 10⁻⁶ /°C

- Tungsten carbide: 4.5 × 10⁻⁶ /°C

These coefficients translate directly to real inspection risk. A 500 mm steel part grows about 5.75 µm for every 1°C rise in temperature (500 mm × 11.5 × 10⁻⁶ /°C). For a part specified at 500.000 mm ± 0.005 mm, a single degree of deviation from the reference temperature introduces 5.75 µm (0.0058 mm) of error, enough to push a conforming part out of tolerance.

That's why aerospace and precision manufacturing environments routinely maintain temperature-controlled inspection rooms at 20°C.
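The thermal expansion arithmetic follows the linear relation ΔL = α · L · ΔT. The sketch below uses the coefficients listed above and also shows a simple correction back to 20°C. It assumes uniform part temperature and ignores expansion of the measuring instrument itself; the function names are hypothetical.

```python
# Coefficients of thermal expansion from the list above, per degree C.
CTE_PER_C = {
    "steel": 11.5e-6,
    "aluminum": 24e-6,
    "tungsten carbide": 4.5e-6,
}

REFERENCE_TEMP_C = 20.0


def thermal_growth_um(length_mm, material, delta_t_c):
    """Length change in micrometres for a temperature change of delta_t_c."""
    return length_mm * CTE_PER_C[material] * delta_t_c * 1000.0


def length_at_reference_mm(measured_mm, material, part_temp_c):
    """Estimate the length the part would have at 20 C from a warm or cold reading."""
    alpha = CTE_PER_C[material]
    return measured_mm / (1.0 + alpha * (part_temp_c - REFERENCE_TEMP_C))


print(f"500 mm steel, +1 C:    {thermal_growth_um(500.0, 'steel', 1.0):.2f} um")
print(f"500 mm aluminum, +1 C: {thermal_growth_um(500.0, 'aluminum', 1.0):.2f} um")
print(f"Corrected length: {length_at_reference_mm(500.00575, 'steel', 21.0):.4f} mm")
```

Software compensation of this kind is only as good as the temperature reading and the assumed coefficient, which is why letting parts soak to room temperature remains the safer practice.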

Other Environmental Factors:

- Vibration: Machine tools, forklifts, or nearby equipment can affect CMM accuracy

- Humidity: Affects air density and can cause corrosion on precision surfaces

3. Operator-Related Errors

- Inconsistent technique: Varying contact pressure when using micrometers

- Parallax errors: Misreading dial indicators from an angle

- Reading errors: Misinterpreting digital displays or vernier scales

4. Workpiece-Related Factors

- Surface irregularities: Burrs, scratches, or rough finishes affect contact measurement

- Part temperature: Hot parts from machining must cool to 20°C before measurement

- Fixturing: Improper clamping can distort flexible parts

Measurement System Analysis (MSA) and Gauge R&R

A Measurement System Analysis (MSA) — including Gauge Repeatability and Reproducibility (GR&R) studies — quantifies how much variation in measurement results comes from the measurement system itself versus actual part variation.

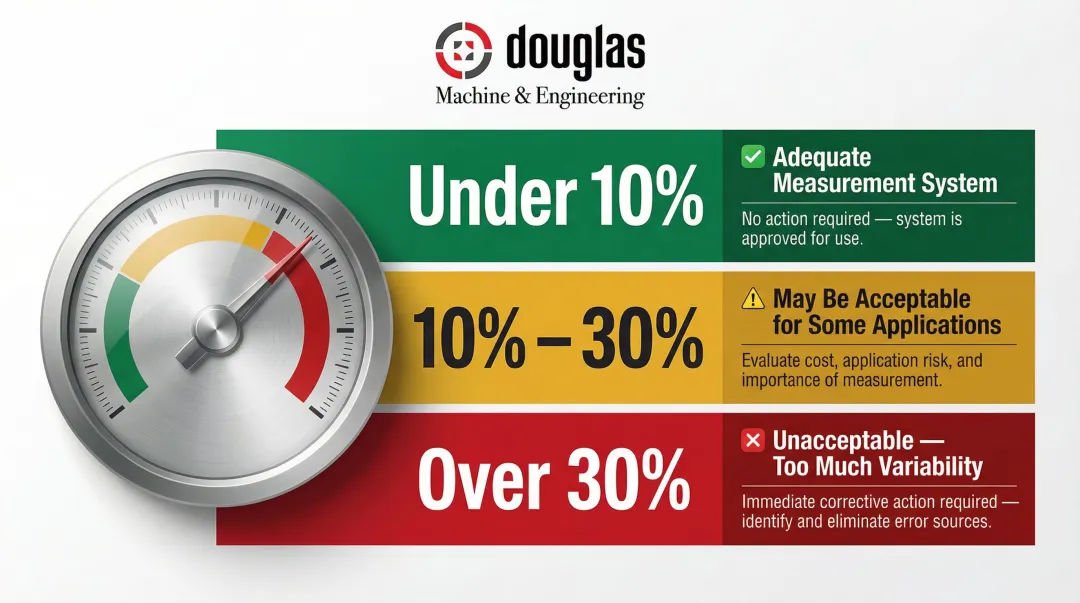

GR&R Acceptance Thresholds (AIAG MSA-4):

- Under 10%: Adequate measurement system

- 10% to 30%: May be acceptable for some applications

- Over 30%: Unacceptable — measurement system contributes too much variability

MSA studies identify whether measurement problems stem from the instrument (repeatability) or the operator (reproducibility) — giving teams a clear target for corrective action.
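As a sketch of how the thresholds are applied, the code below combines repeatability (equipment variation, EV) and reproducibility (appraiser variation, AV) into a %GRR figure relative to total variation and then classifies it. The inputs are assumed to be standard deviations already estimated from a study; estimating them from raw data (range or ANOVA method) is not shown, and the example numbers are made up.

```python
import math


def percent_grr(ev, av, pv):
    """%GRR relative to total variation.

    ev: repeatability (equipment variation)
    av: reproducibility (appraiser variation)
    pv: part-to-part variation
    All three must be in the same units.
    """
    grr = math.sqrt(ev ** 2 + av ** 2)
    total = math.sqrt(grr ** 2 + pv ** 2)
    return 100.0 * grr / total


def classify_grr(pct):
    """Apply the acceptance thresholds listed above."""
    if pct < 10.0:
        return "adequate"
    if pct <= 30.0:
        return "may be acceptable"
    return "unacceptable"


# Hypothetical study results, in micrometres.
pct = percent_grr(ev=0.3, av=0.4, pv=12.0)
print(f"%GRR = {pct:.1f}% -> {classify_grr(pct)}")
```

Comparing EV and AV on their own shows where to act: a dominant EV points at the instrument, while a dominant AV points at operator technique or training.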

How Quality Standards Govern Precision Measurement

ISO 9001:2015 Clause 7.1.5: Monitoring and Measuring Resources

ISO 9001:2015 requires organizations to identify, control, and calibrate all monitoring and measurement equipment used to verify product conformance. Every caliper, micrometer, and CMM in a certified facility must have:

- Documented calibration history

- Traceability to recognized standards (e.g., NIST)

- Calibration status identification

- Safeguards against unauthorized adjustments or damage

Documented information must be retained as evidence of fitness for purpose.
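One way a shop might track these attributes is with a simple record per instrument. The sketch below is a hypothetical data structure, not something prescribed by ISO 9001:2015: the field names, the fixed-interval policy, and the example values are all assumptions for illustration.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class CalibrationRecord:
    """Hypothetical record for one piece of measuring equipment."""
    instrument_id: str
    description: str
    last_calibrated: date
    interval_days: int      # assumed fixed calibration interval
    traceable_to: str       # e.g. the national standard the chain leads to
    certificate_no: str     # reference to the retained calibration certificate

    def due_date(self) -> date:
        return self.last_calibrated + timedelta(days=self.interval_days)

    def is_in_calibration(self, today: date) -> bool:
        """True while the instrument is within its calibration interval."""
        return today <= self.due_date()


# Made-up example entry.
record = CalibrationRecord(
    instrument_id="MIC-0042",
    description="0-25 mm digital micrometer",
    last_calibrated=date(2024, 1, 15),
    interval_days=365,
    traceable_to="NIST",
    certificate_no="CERT-0001",
)

print(record.due_date())
print(record.is_in_calibration(date(2024, 6, 1)))
```

Checking `is_in_calibration` before an inspection job starts is a lightweight way to keep out-of-date instruments off the floor.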

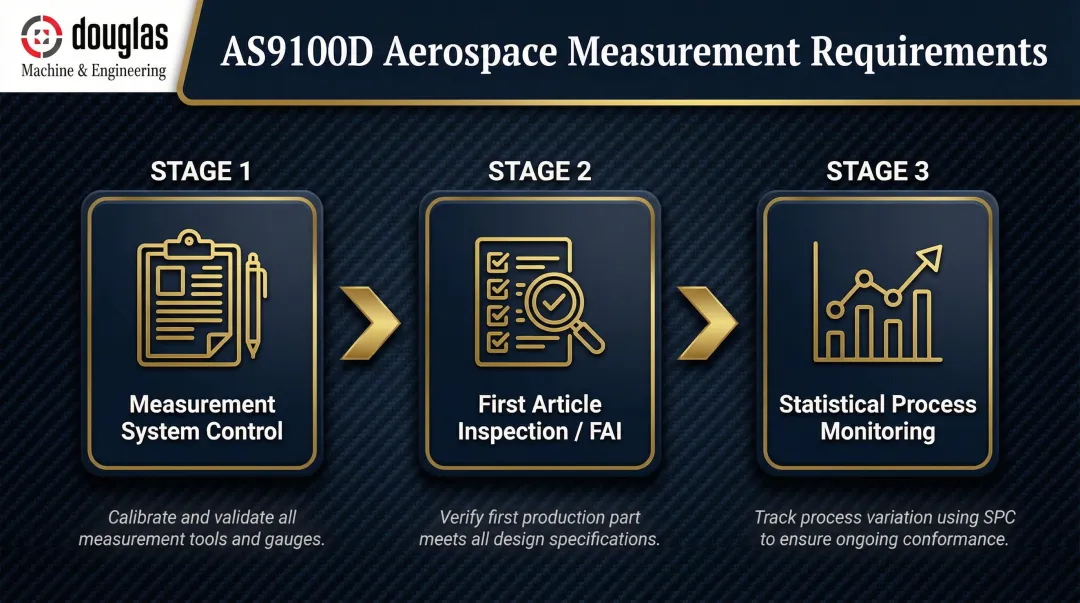

AS9100D: Aerospace-Specific Measurement Requirements

AS9100D builds on ISO 9001 with additional requirements for aerospace and defense manufacturing:

- Measurement system control: Register of monitoring and measuring equipment

- First Article Inspection (FAI): Documented verification per AS9102 when products or processes change

- Statistical process monitoring: Use of statistical techniques for process control

Aerospace and defense primes (Boeing, Lockheed Martin, Raytheon) generally require their suppliers to hold AS9100D certification, so rigorous measurement practices become a contractual and regulatory obligation.

For example, DM&E maintains both ISO 9001:2015 and AS9100D certifications, embedding measurement controls into every production program. Inspection services cover FAI reporting, CMM analysis, and dimensional verification on certified granite tables, supporting full traceability from raw material to finished part.

NIST Traceability

Calibration standards must be traceable back to national or international measurement standards. NIST (National Institute of Standards and Technology) assures traceability of measurement results it provides directly; other organizations must establish their own traceability to NIST or other specified references.

In practice, NIST traceability means a measurement taken at one facility carries the same meaning as the same measurement taken anywhere else in the supply chain. For global aerospace programs — where components are manufactured and assembled across multiple continents — that consistency is non-negotiable.

Key elements that establish NIST traceability include:

- Calibration certificates referencing an unbroken chain to NIST standards

- Known and documented measurement uncertainty at each step

- Calibrated reference standards maintained at controlled intervals

Frequently Asked Questions

What is a precision measurement?

Precision measurement is the process of quantifying a physical property — such as a part dimension or surface finish — using calibrated instruments and controlled methods that minimize variability and document uncertainty. It differs from casual measurement by requiring instrument selection, environmental controls, and traceability.

What is an example of precision?

A CNC-machined shaft diameter measured ten times with a digital micrometer, with all readings falling within 0.001 mm of each other, demonstrates high precision. The measurements are tightly clustered and repeatable, indicating low random error.

What is the difference between accuracy and precision?

Accuracy is how close a measurement is to the true value; precision is how repeatable measurements are. A measurement system can be precise (consistent) but inaccurate (consistently wrong), or accurate on average but imprecise (scattered results).

What tools are used for precision measurement in manufacturing?

Common instruments include calipers (0.01 mm resolution), micrometers (0.001 mm resolution), gauge blocks, coordinate measuring machines (CMMs), and surface profilometers. Tool selection depends on required tolerance and feature geometry.

What causes measurement errors in precision manufacturing?

Main sources include instrument calibration drift, temperature-induced dimensional changes (thermal expansion), inconsistent operator technique, and workpiece surface irregularities. All can be controlled through MSA studies, calibrated measurement environments, and rigorous training.

Why does precision measurement matter in aerospace and defense manufacturing?

In aerospace and defense, components must conform to tight engineering tolerances defined by regulatory and safety requirements. Measurement errors result in non-conforming parts, rework costs (2–3% scrap rates are typical), or safety failures in critical applications. AS9100D certification requires documented, reliable measurement systems at every stage of production.